Hello Everyone,

Greetings. I hope you are well. I would like to share some very good news with you.

I recently published a paper with two co-authors at the IEEE 35th International Symposium on Computer-Based Medical Systems (CBMS 2022, July 21-23, 2022, Shenzhen, China, Online Event). CBMS is the premier conference for computer-based medical systems, and one of the main conferences in the fields of medical informatics and biomedical informatics.

The title of my paper is “Exploring LRP and Grad-CAM visualization to interpret multi-label-multi-class pathology prediction using chest radiography” (Mahbub Ul Alam, Jón Rúnar Baldvinsson and Yuxia Wang). In this paper, we tried to explain how deep neural networks arrive at their pathology (abnormality) predictions on chest X-ray data, using two popular interpretability methods. We investigated whether these explanations match the clinical diagnosis. Interpretability is crucial, and it is emphasized in the recent European Union Artificial Intelligence Act. We hope that this paper will have a positive impact in this respect.

The paper was well received during its presentation at the CBMS 2022 symposium. I am delighted to inform you that it received the ‘best student paper award’. The award was presented by the IEEE Technical Committee on Computational Life Science (TCCLS).

I am very honoured and would like to thank DSV for providing me with this opportunity. I am fortunate to have received a second award for my research while working here. Previously I won the ‘best paper award’ at BIOSTEC HEALTHINF 2020 (you can read more about it here).

Want to know more about the paper? Please check out the following presentation video I made!

Abstract:

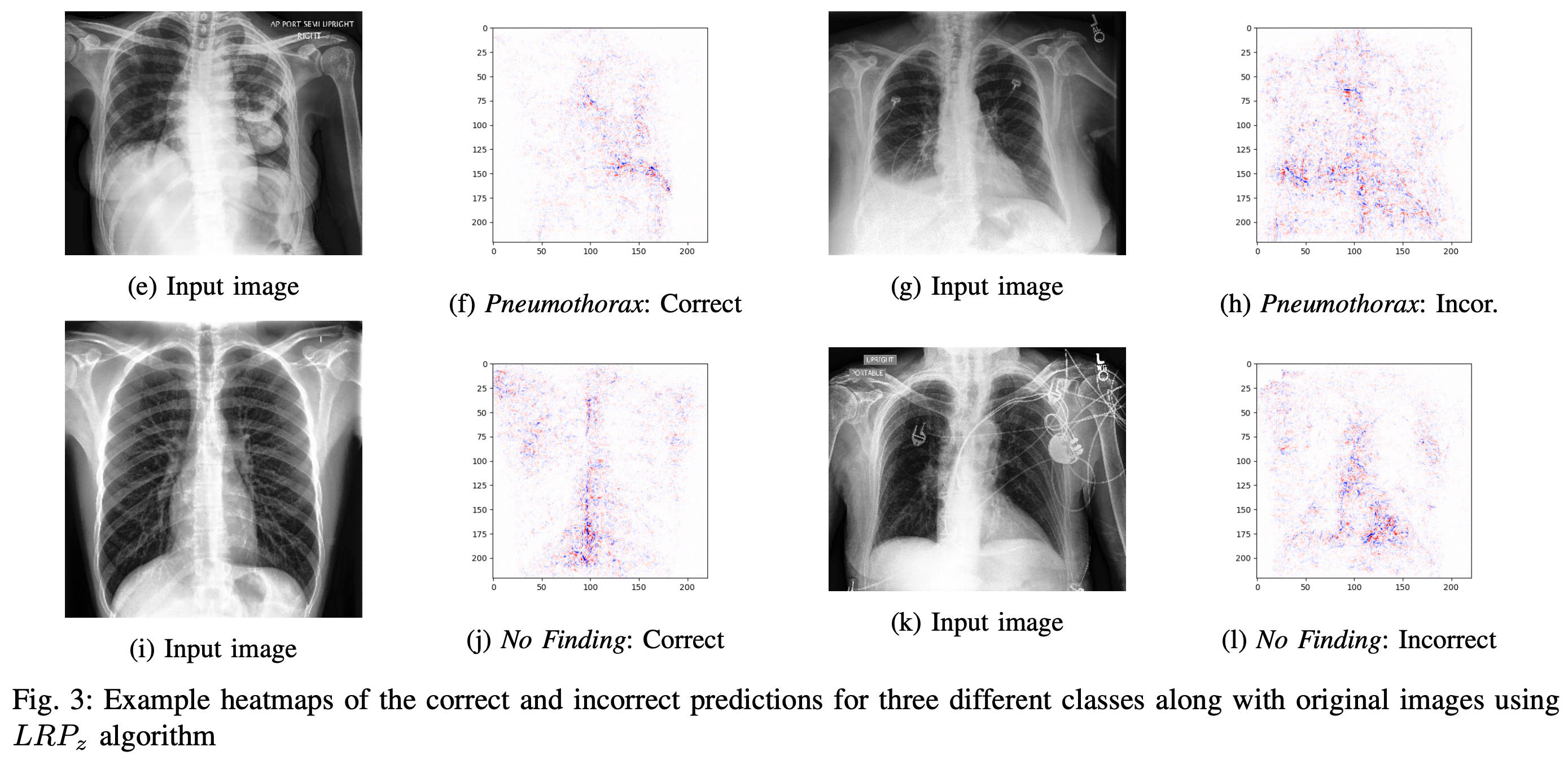

The area of interpretable deep neural networks has received increased attention in recent years due to the need for transparency in various fields, including medicine, healthcare, stock market analysis, compliance with legislation, and law. Layer-wise Relevance Propagation (LRP) and Gradient-weighted Class Activation Mapping (Grad-CAM) are two widely used algorithms to interpret deep neural networks. In this work, we investigated the applicability of these two algorithms in the sensitive application area of interpreting chest radiography images. In order to get a more nuanced and balanced outcome, we use a multi-label classification-based dataset and analyze the model prediction by visualizing the outcome of LRP and Grad-CAM on the chest radiography images. The results show that LRP provides more granular heatmaps than Grad-CAM when applied to the CheXpert dataset classification model. We posit that this is due to the inherent construction difference of these algorithms (LRP is layer-wise accumulation, whereas Grad-CAM focuses primarily on the final sections in the model’s architecture). Both can be useful for understanding the classification from a micro or macro level to get a superior and interpretable clinical decision support system.
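For readers curious about the construction difference mentioned in the abstract, here is a minimal NumPy sketch of the two ideas. It is not the code from the paper: the function names `grad_cam` and `lrp_epsilon_dense` are hypothetical, the inputs are assumed to be already extracted from a model, and the epsilon rule shown is only one of several LRP rules (the abstract does not say which rule the paper uses). Grad-CAM produces one coarse map from the last convolutional layer, while LRP redistributes relevance through one layer at a time, so the second function would be applied repeatedly from the output back to the input.

```python
import numpy as np


def grad_cam(activations, gradients):
    """Coarse heatmap from the last convolutional layer.

    activations, gradients: arrays of shape (K, H, W), i.e. the K feature
    maps and the gradient of the class score with respect to them.
    """
    # One weight per feature map: global average of its gradients.
    weights = gradients.mean(axis=(1, 2))              # shape (K,)
    # Weighted sum of the feature maps, then ReLU to keep positive evidence.
    cam = np.tensordot(weights, activations, axes=1)   # shape (H, W)
    return np.maximum(cam, 0.0)


def lrp_epsilon_dense(a, W, b, relevance_out, eps=1e-6):
    """Propagate relevance back through ONE dense layer (LRP epsilon rule).

    a: input activations, shape (n_in,)
    W: weights, shape (n_in, n_out); b: bias, shape (n_out,)
    relevance_out: relevance assigned to the layer outputs, shape (n_out,)
    """
    z = a @ W + b                                      # pre-activations
    # Small signed term that keeps the division numerically stable.
    stabilizer = eps * np.where(z >= 0, 1.0, -1.0)
    s = relevance_out / (z + stabilizer)
    # Each input receives relevance in proportion to its contribution a_j * W_jk.
    return a * (W @ s)                                 # shape (n_in,)
```

With a zero bias, the relevance returned by `lrp_epsilon_dense` sums to approximately the relevance that was passed in, which is why repeating the step layer by layer can yield a fine-grained map at input resolution, whereas `grad_cam` stays at the resolution of the final feature maps.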